8.2 Inference for Two Independent Sample Means

Suppose we have two independent samples of quantitative data. If there is no apparent relationship between the samples, our parameter of interest is the difference in means, μ1-μ2, with a point estimate of $\bar{x}_1 - \bar{x}_2$.

The comparison of two population means is very common. A difference between the two samples depends on both the means and their respective standard deviations. Very different means can occur by chance if there is great variation among the individual samples. In order to account for the variation, we take the difference of the sample means, and divide by the standard error in order to standardize the difference. We know that when conducting an inference for means, the sampling distribution we use (Z or t) depends on our knowledge of the population standard deviation.

Both Population Standard Deviations Known (Z)

Even though this situation is unlikely, since the population standard deviations are rarely known, we will begin by demonstrating these ideas under ideal circumstances. If both populations are normal, the sampling distribution of each sample mean is normal, and so is the sampling distribution of the difference between the means. We can combine the standard errors of each sampling distribution to get a standard error of:

$$SE = \sqrt{\frac{\sigma_1^2}{n_1} + \frac{\sigma_2^2}{n_2}}$$

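So the sampling distribution of $\bar{x}_1 - \bar{x}_2$, assuming we know both standard deviations, is approximately normal with mean μ1-μ2 and standard deviation equal to the standard error:

$$\bar{X}_1 - \bar{X}_2 \sim N\left(\mu_1 - \mu_2,\ \sqrt{\frac{\sigma_1^2}{n_1} + \frac{\sigma_2^2}{n_2}}\right)$$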
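One way to see this sampling distribution is to simulate it. The sketch below uses hypothetical population values (the means, standard deviations, and sample sizes are invented for illustration) and checks that the simulated differences center near μ1-μ2 with a spread close to the standard error formula.

```python
import random
from math import sqrt
from statistics import mean, stdev

random.seed(0)  # fixed seed so the run is repeatable

# Hypothetical population values, chosen only for illustration
mu1, sigma1, n1 = 50.0, 4.0, 25
mu2, sigma2, n2 = 48.0, 3.0, 25

def sample_mean(mu, sigma, n):
    """Mean of one random sample of size n from a normal population."""
    return mean(random.gauss(mu, sigma) for _ in range(n))

# Repeat the two-sample experiment many times and record each difference
diffs = [sample_mean(mu1, sigma1, n1) - sample_mean(mu2, sigma2, n2)
         for _ in range(5000)]

# Standard error from the formula: sqrt(16/25 + 9/25), which is 1 here
theoretical_se = sqrt(sigma1**2 / n1 + sigma2**2 / n2)

print("mean of simulated differences:", round(mean(diffs), 3))
print("sd of simulated differences:  ", round(stdev(diffs), 3))
print("standard error from formula:  ", round(theoretical_se, 3))
```

The simulated mean should land close to μ1-μ2 = 2 and the simulated standard deviation close to the formula value, though the exact figures depend on the random draws.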

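Therefore the Z test statistic would be:

$$Z = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{\sqrt{\frac{\sigma_1^2}{n_1} + \frac{\sigma_2^2}{n_2}}}$$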
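As a minimal sketch of this calculation, the Python below computes the Z test statistic and a two-sided p-value using only the standard library. The function name `two_sample_z` and all of the input numbers are hypothetical, chosen only to illustrate the arithmetic.

```python
from math import sqrt
from statistics import NormalDist

def two_sample_z(xbar1, xbar2, sigma1, sigma2, n1, n2, null_diff=0.0):
    """Return (z, two-sided p-value) for H0: mu1 - mu2 = null_diff.

    Assumes both population standard deviations are known.
    """
    se = sqrt(sigma1**2 / n1 + sigma2**2 / n2)  # combined standard error
    z = ((xbar1 - xbar2) - null_diff) / se      # standardized difference
    p_two_sided = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_two_sided

# Hypothetical summary statistics (not from the text)
z, p = two_sample_z(xbar1=52.0, xbar2=50.0,
                    sigma1=4.0, sigma2=3.0, n1=64, n2=36)
print("z =", round(z, 3))
print("two-sided p-value =", round(p, 4))
```

For a one-sided alternative, the p-value would instead be the single tail area in the direction of the alternative hypothesis.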

Our confidence interval would be of the form:

$$\text{point estimate} \pm \text{margin of error}$$

Where our point estimate is:

$$\bar{x}_1 - \bar{x}_2$$

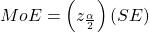

And the margin of error is the z critical value times the standard error, $MoE = z_{\alpha/2} \cdot SE$, where:

- $z_{\alpha/2}$ is the z critical value with area to the right equal to $\alpha/2$
- and SE is $\sqrt{\frac{\sigma_1^2}{n_1} + \frac{\sigma_2^2}{n_2}}$

Since we rarely know one population’s standard deviation, much less two, the only situation where we might consider using this in practice is for two very large samples.
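The interval described above can be sketched in a few lines of Python. This is an illustration under the assumption that both population standard deviations are known. The function name `two_sample_z_interval` and the input values are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def two_sample_z_interval(xbar1, xbar2, sigma1, sigma2, n1, n2,
                          confidence=0.95):
    """Confidence interval for mu1 - mu2 with known population SDs."""
    alpha = 1 - confidence
    # z critical value with area alpha/2 to its right
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    se = sqrt(sigma1**2 / n1 + sigma2**2 / n2)
    point_estimate = xbar1 - xbar2
    moe = z_crit * se  # margin of error
    return point_estimate - moe, point_estimate + moe

# Hypothetical summary statistics (not from the text)
lower, upper = two_sample_z_interval(52.0, 50.0, 4.0, 3.0, 64, 36)
print("95% CI for mu1 - mu2:", (round(lower, 3), round(upper, 3)))
```

If the interval excludes 0, the data are consistent with a real difference between the population means at that confidence level.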

Both Population Standard Deviations Unknown (t)

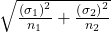

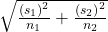

Most likely we will not know the population standard deviations, but we can estimate them using the two sample standard deviations from our independent samples. In this case we will use a t sampling distribution with standard error:

$$SE = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}$$

Assumptions for the Difference in Two Independent Sample Means

Recall we need to be able to assume an underlying normal distribution and no outliers or skewness in order to use the t distribution. We can relax these assumptions as our sample sizes get bigger and can typically just use the Z for very large sample sizes.

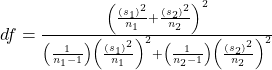

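The remaining question is what to use for degrees of freedom when comparing two groups. One method requires a somewhat complicated calculation, but if you have access to a computer or calculator this isn’t an issue. We can find a precise df for two independent samples as follows:

$$df = \frac{\left(\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}\right)^2}{\frac{1}{n_1 - 1}\left(\frac{s_1^2}{n_1}\right)^2 + \frac{1}{n_2 - 1}\left(\frac{s_2^2}{n_2}\right)^2}$$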

NOTES: The df are not always a whole number; you usually want to round down. It is not necessary to compute this by hand. Find a reliable technology to do this.
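As one example of letting technology do the work, here is a short Python sketch of the usual two-sample df calculation (often called the Welch or Satterthwaite approximation). The function name `welch_df` and the sample values are hypothetical.

```python
from math import floor

def welch_df(s1, s2, n1, n2):
    """Approximate degrees of freedom for two independent samples."""
    v1 = s1**2 / n1  # estimated variance of the first sample mean
    v2 = s2**2 / n2  # estimated variance of the second sample mean
    return (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))

# Hypothetical sample standard deviations and sizes (not from the text)
df = welch_df(s1=4.0, s2=3.0, n1=10, n2=10)
print("precise df:", round(df, 2))
print("rounded down:", floor(df))
```

Rounding down gives a slightly larger critical value, which keeps the resulting test or interval on the conservative side.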

Hypothesis Tests for the Difference in Two Independent Sample Means

Recall the steps to a hypothesis test never change. When our parameter of interest is μ1-μ2 we are often interested in an effect between the two groups. In order to show an effect, we will have to first assume there is no difference by stating it in the Null Hypothesis as:

Ho: μ1-μ2=0 OR Ho: μ1=μ2

Ha: μ1-μ2 (<, >, ≠) 0 OR Ha: μ1 (<, >, ≠) μ2

The t test statistic is calculated as follows:

$$t = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{\sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}}$$

- s1 and s2, the sample standard deviations, are estimates of σ1 and σ2, respectively.
- $\bar{x}_1$ and $\bar{x}_2$ are the sample means. μ1 and μ2 are the population means. (Note: in the null we are typically assuming μ1-μ2=0.)
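The t test statistic can be sketched in Python as follows. The function name `two_sample_t` and the summary statistics are hypothetical. The critical value 2.120 is the standard t-table entry for a two-sided test at α = 0.05 with 16 degrees of freedom, which is what the df calculation for these particular numbers gives after rounding down.

```python
from math import sqrt

def two_sample_t(xbar1, xbar2, s1, s2, n1, n2, null_diff=0.0):
    """t statistic for H0: mu1 - mu2 = null_diff (population SDs unknown)."""
    se = sqrt(s1**2 / n1 + s2**2 / n2)  # standard error from sample SDs
    return ((xbar1 - xbar2) - null_diff) / se

# Hypothetical summary statistics (not from the text)
t_stat = two_sample_t(xbar1=52.0, xbar2=50.0, s1=4.0, s2=3.0, n1=10, n2=10)

# Table value for df = 16, two-sided alpha = 0.05
# (the precise df for these values is about 16.7, rounded down to 16)
t_crit = 2.120
reject_null = abs(t_stat) > t_crit

print("t =", round(t_stat, 3))
print("reject H0 at the 5% level:", reject_null)
```

With only 10 observations per group, the same 2-unit difference that might look convincing in large samples is not enough to reject the null here.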

Confidence Intervals for the Difference in Two Independent Sample Means

Once we have identified a difference in a hypothesis test, we may want to estimate it. Our confidence interval would be of the form:

$$\text{point estimate} \pm \text{margin of error}$$

Where our point estimate is:

$$\bar{x}_1 - \bar{x}_2$$

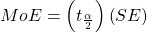

And the MoE is the t critical value times the standard error, $MoE = t_{\alpha/2} \cdot SE$, where:

- $t_{\alpha/2}$ is the t critical value with area to the right equal to $\alpha/2$
- and SE is $\sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}$
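A minimal Python sketch of this interval follows. Because the standard library has no t distribution, the critical value is passed in as an argument, to be looked up in a t table or found with other technology. The function name `two_sample_t_interval` and the summary statistics are hypothetical.

```python
from math import sqrt

def two_sample_t_interval(xbar1, xbar2, s1, s2, n1, n2, t_crit):
    """Confidence interval for mu1 - mu2 using sample SDs.

    t_crit is the t critical value with area alpha/2 to its right,
    supplied by the caller for the appropriate degrees of freedom.
    """
    se = sqrt(s1**2 / n1 + s2**2 / n2)
    point_estimate = xbar1 - xbar2
    moe = t_crit * se  # margin of error
    return point_estimate - moe, point_estimate + moe

# Hypothetical summary statistics (not from the text).
# t_crit = 2.120 is the table value for 95% confidence with df = 16.
lower, upper = two_sample_t_interval(52.0, 50.0, 4.0, 3.0, 10, 10,
                                     t_crit=2.120)
print("95% CI for mu1 - mu2:", (round(lower, 3), round(upper, 3)))
```

An interval that contains 0, as this one does, means a difference of zero between the population means remains plausible.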

Glossary

- Independent: The occurrence of one event has no effect on the probability of the occurrence of another event
- Quantitative data: Numerical data with a mathematical context
- Parameter: A number that is used to represent a population characteristic and can only be calculated as the result of a census
- Difference in means: The difference in the means of two independent populations
- Point estimate: The value that is calculated from a sample used to estimate an unknown population parameter
- Standard error: The standard deviation of a sampling distribution
- Inference: The facet of statistics dealing with using a sample to generalize (or infer) about the population
- Sampling distribution: The probability distribution of a statistic at a given sample size
- Test statistic: A measure of how far what you observed is from the hypothesized (or claimed) value
- Confidence interval: An interval built around a point estimate for an unknown population parameter
- Outlier: An observation that stands out from the rest of the data significantly
- Degrees of freedom: The number of objects in a sample that are free to vary