7.1 Inference for Two Dependent Samples (Matched Pairs)

Learning Objectives

By the end of this chapter, the student should be able to:

- Classify hypothesis tests by type

- Conduct and interpret hypothesis tests for two population means with known population standard deviations

- Conduct and interpret hypothesis tests for two population means with unknown population standard deviations

- Conduct and interpret hypothesis tests for matched or paired samples

- Conduct and interpret hypothesis tests for two population proportions

Studies often compare two groups. For example, maybe researchers are interested in the effect aspirin has in preventing heart attacks. One group is given aspirin and the other a placebo that has no effect, and the groups’ heart attack rates are studied over several years. Other studies may compare various diet and exercise programs. Politicians compare the proportion of individuals from different income brackets who might vote for them. Students are interested in whether SAT or GRE preparatory courses really help raise their scores.

You have learned to conduct inference on single means and single proportions. We know that the first step is deciding the type of data with which we are working. For quantitative data, we are focused on means, while for categorical data, we are focused on proportions. In this chapter, we will compare two means or two proportions. The general procedure is still the same, just expanded. With two-sample analysis, it is good to know what the formulas look like and where they originate; however, you will probably lean heavily on technology in performing the calculations.

In comparing two means, we are obviously working with two groups, but first we need to think about the relationship between them. The groups are classified either as independent or dependent. Independent samples consist of two samples that have no relationship—that is, sample values selected from one population are not related in any way to sample values selected from the other population. Dependent samples consist of two groups that have some sort of identifiable relationship.

Two Dependent Samples (Matched Pairs)

Two samples that are dependent typically come from a matched pairs experimental design. The parameter tested using matched pairs is the population mean difference. When using inference techniques for matched or paired samples, the following characteristics should be present:

- Simple random sampling is used.

- Sample sizes are often small.

- Two measurements (samples) are drawn from the same individual or object, or from a pair of extremely similar individuals or objects.

- Differences are calculated from the matched or paired samples.

- The differences form the sample that is used for analysis.

To perform statistical inference, we first need to know the sampling distribution of the statistic that estimates our parameter of interest. Remember that, although we start with two samples, the differences are the data in which we are interested, and our parameter of interest is μd, the mean difference. Our point estimate is $\bar{x}_d$, the sample mean of the differences. In a perfect world, we could assume that both samples come from normal distributions, in which case the differences are also normally distributed. However, to use Z, we must know the population standard deviation, which is nearly impossible for a distribution of differences. In addition, it is very hard to find large numbers of matched pairs, so the sampling distribution we typically use for $\bar{x}_d$ is a t-distribution with n – 1 degrees of freedom, where n is the number of differences.

Confidence intervals may be calculated on their own for two samples, but often, we first want to conduct a hypothesis test to formally check if a difference exists, especially in the case of matched pairs. If we do find a statistically significant difference, then we may estimate it with a CI after the fact.

Hypothesis Tests for the Mean Difference

In a hypothesis test for matched or paired samples, subjects are matched in pairs and differences are calculated, and the population mean difference, μd, is our parameter of interest. Although it is possible to test for a certain magnitude of effect, we are most often just looking for a general effect. Our hypothesis would then look like:

- H0: μd = 0

- Ha: μd (<, >, ≠) 0

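The steps are familiar by now: the test is a Student's t-test for a single population mean, applied to the differences, with n – 1 degrees of freedom and the test statistic:

t = $\dfrac{\bar{x}_d - \mu_d}{s_d / \sqrt{n}}$

where μd is the hypothesized mean difference from H0 (usually 0), $s_d$ is the standard deviation of the differences, and n is the number of pairs.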
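As a quick check on the arithmetic, the test statistic can be computed directly from the summary statistics. This is a minimal sketch; the function name `paired_t_statistic` is hypothetical, and the inputs are the rounded values from the hypnotism example in this section ($\bar{x}_d$ = –3.13, $s_d$ = 2.91, n = 8).

```python
import math

def paired_t_statistic(x_bar_d: float, s_d: float, n: int, mu_d: float = 0.0) -> float:
    """t = (x_bar_d - mu_d) / (s_d / sqrt(n)) for n matched pairs."""
    standard_error = s_d / math.sqrt(n)  # estimated standard error of x_bar_d
    return (x_bar_d - mu_d) / standard_error

# Rounded summary statistics from the hypnotism example (H0 value mu_d = 0).
t_hypnotism = paired_t_statistic(-3.13, 2.91, 8)
print(round(t_hypnotism, 2))  # -3.04
```

Because only the differences enter the formula, this is the same computation as a one-sample t statistic; the pairing is handled entirely by taking differences first.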

Example

A study was conducted to investigate the effectiveness of hypnotism in reducing pain. Results for randomly selected subjects are shown in the figure below. A lower score indicates less pain. The “before” value is matched to an “after” value, and the differences are calculated. The differences have a normal distribution. Are the sensory measurements, on average, lower after hypnotism? Test at a 5% significance level.

| Subject | A | B | C | D | E | F | G | H |
|---|---|---|---|---|---|---|---|---|
| Before | 6.6 | 6.5 | 9.0 | 10.3 | 11.3 | 8.1 | 6.3 | 11.6 |
| After | 6.8 | 2.4 | 7.4 | 8.5 | 8.1 | 6.1 | 3.4 | 2.0 |

Figure 7.2: Reported pain data

Solution

Corresponding “before” and “after” values form matched pairs. (Calculate “after” – “before.”)

| After data | Before data | Difference |
|---|---|---|
| 6.8 | 6.6 | 0.2 |
| 2.4 | 6.5 | -4.1 |
| 7.4 | 9 | -1.6 |
| 8.5 | 10.3 | -1.8 |
| 8.1 | 11.3 | -3.2 |
| 6.1 | 8.1 | -2 |
| 3.4 | 6.3 | -2.9 |
| 2 | 11.6 | -9.6 |

Figure 7.3: Differences

The data for the test are the differences: {0.2, –4.1, –1.6, –1.8, –3.2, –2, –2.9, –9.6}.

The sample mean and sample standard deviation of the differences are $\bar{x}_d$ = –3.13 and $s_d$ = 2.91. Verify these values.
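One way to verify these values is to rebuild the differences from the raw scores in Figure 7.2 and use Python's standard `statistics` module. This sketch rounds each difference to one decimal place only to suppress floating-point noise (for example, 6.8 – 6.6 is not stored as exactly 0.2).

```python
import statistics

# Hypnotism pain scores from Figure 7.2 (subjects A through H).
before = [6.6, 6.5, 9.0, 10.3, 11.3, 8.1, 6.3, 11.6]
after = [6.8, 2.4, 7.4, 8.5, 8.1, 6.1, 3.4, 2.0]

# Difference = after - before, matching Figure 7.3.
differences = [round(a - b, 1) for a, b in zip(after, before)]

x_bar_d = statistics.mean(differences)  # sample mean of the differences
s_d = statistics.stdev(differences)     # sample SD, with n - 1 in the denominator

print(differences)
print(x_bar_d, s_d)
```

The unrounded mean is –3.125, which the text reports as –3.13, and the standard deviation is about 2.911. Note that `statistics.stdev` (not `statistics.pstdev`) is the sample standard deviation that the t-test uses.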

Let μd be the population mean for the differences. We use the subscript d to denote “differences.”

Random variable: $\bar{X}_d$ = the mean difference of the sensory measurements.

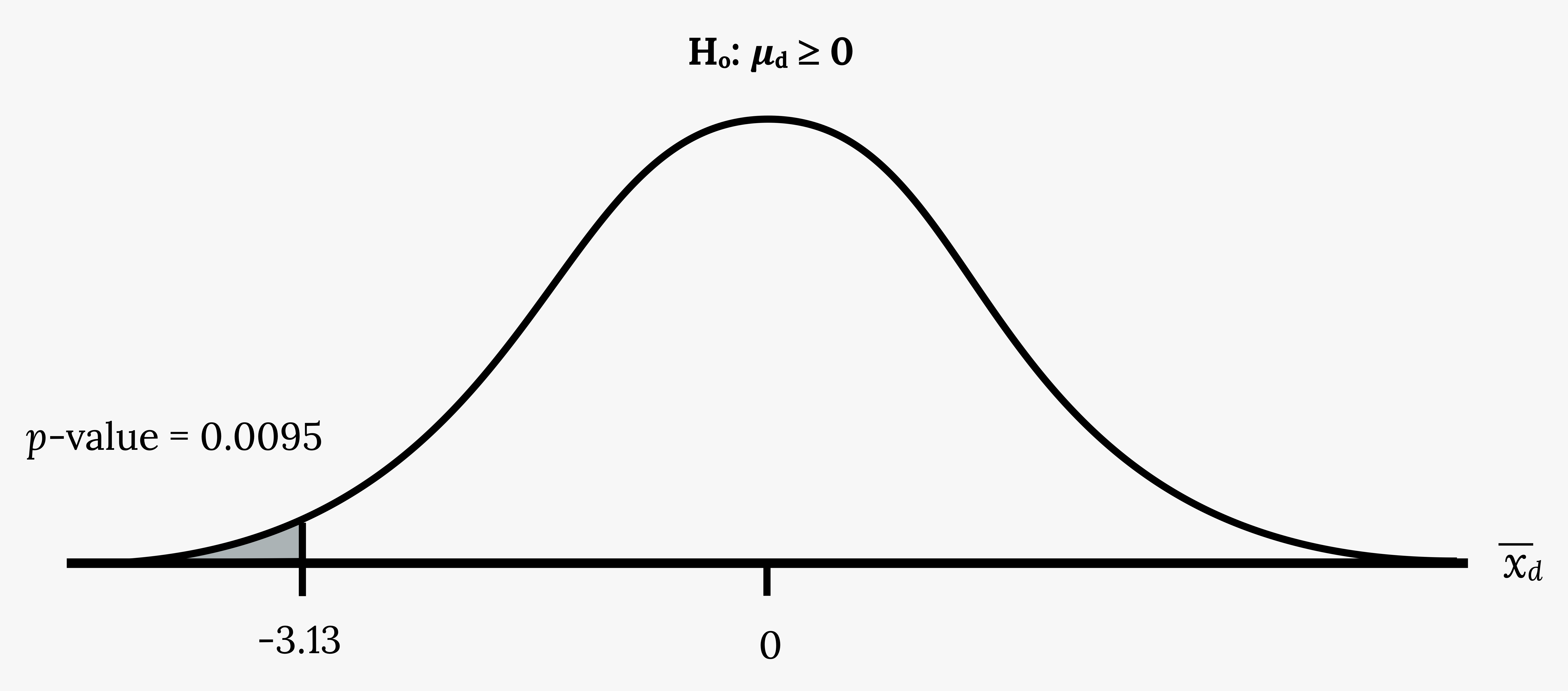

H0: μd ≥ 0

The null hypothesis states that the mean difference is zero or positive, meaning that the same or more pain is felt after hypnotism. That means the subject shows no improvement. μd is the population mean of the differences.

Ha: μd < 0

The alternative hypothesis states that the mean difference is negative, meaning less pain is felt after hypnotism. That means the subject shows improvement. The score should be lower after hypnotism, so the difference ought to be negative to indicate improvement.

Distribution for the test:

The distribution is a Student’s t with df = n – 1 = 8 – 1 = 7. Use t7. (Notice that the test is for a single population mean.)

Calculate the p-value using the Student’s t-distribution: p-value = 0.0095.

$\bar{X}_d$ is the random variable for the differences.

The sample mean and sample standard deviation of the differences are:

$\bar{x}_d$ = –3.13

$s_d$ = 2.91
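In practice the p-value comes from a calculator, a t-table, or a library routine such as SciPy's `scipy.stats.t.cdf`. To keep this sketch dependency-free, it instead integrates the Student's t density numerically with Simpson's rule and uses the symmetry of the distribution about 0. The helper names (`t_pdf`, `t_cdf`, `p_value`) are illustrative, not from any library.

```python
import math
import statistics

def t_pdf(x: float, df: int) -> float:
    """Density of Student's t-distribution with df degrees of freedom."""
    coef = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))
    return coef * (1 + x * x / df) ** (-(df + 1) / 2)

def t_cdf(t: float, df: int, steps: int = 2000) -> float:
    """P(T <= t): Simpson's rule on [0, |t|], then symmetry about 0."""
    h = abs(t) / steps  # steps is even, as Simpson's rule requires
    total = t_pdf(0.0, df) + t_pdf(abs(t), df)
    for i in range(1, steps):
        total += (4 if i % 2 == 1 else 2) * t_pdf(i * h, df)
    area = total * h / 3  # area under the density between 0 and |t|
    return 0.5 + area if t >= 0 else 0.5 - area

def p_value(t: float, df: int, alternative: str) -> float:
    """p-value for Ha: mu_d < 0 ("less"), > 0 ("greater"), or != 0 ("two-sided")."""
    if alternative == "less":
        return t_cdf(t, df)
    if alternative == "greater":
        return 1.0 - t_cdf(t, df)
    if alternative == "two-sided":
        return 2.0 * (1.0 - t_cdf(abs(t), df))
    raise ValueError("alternative must be 'less', 'greater', or 'two-sided'")

# Hypnotism differences (after - before) from Figure 7.3.
differences = [0.2, -4.1, -1.6, -1.8, -3.2, -2.0, -2.9, -9.6]
n = len(differences)
t_stat = statistics.mean(differences) / (statistics.stdev(differences) / math.sqrt(n))
p = p_value(t_stat, n - 1, "less")  # Ha: mu_d < 0, df = 7
print(f"t = {t_stat:.2f}, p-value = {p:.4f}")
```

The left-tail area is just under 0.01, in line with the reported p-value. The `alternative` argument mirrors the three forms of Ha listed earlier, so the same helper covers right-tailed and two-tailed matched-pairs tests.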

Compare α and the p-value:

α = 0.05 and p-value = 0.0095, so α > p-value.

Make a decision:

Since α > p-value, reject H0. There is evidence that μd < 0, that is, that there is improvement.

Conclusion:

At a 5% level of significance, from the sample data, there is sufficient evidence to conclude that the sensory measurements, on average, are lower after hypnotism. Hypnotism appears to be effective in reducing pain.

Note: For the TI-83+ and TI-84 calculators, you can either calculate the differences ahead of time (after – before) and put the differences into a list, or you can put the after data into a first list and the before data into a second list. Then go to a third list and arrow up to the name. Enter 1st list name – 2nd list name. The calculator will do the subtraction, and you will have the differences in the third list. Use your list of differences as the data. Press STAT and arrow over to TESTS. Press 2:T-Test. Arrow over to Data and press ENTER. Arrow down and enter 0 for μ0, the name of the list where you put the data, and 1 for Freq:. Arrow down to μ: and arrow over to < μ0. Press ENTER. Arrow down to Calculate and press ENTER. The p-value is 0.0094, and the test statistic is -3.04. Repeat these instructions, except arrow to Draw (instead of Calculate). Press ENTER.

Your Turn!

A study was conducted to investigate how effective a new diet was in lowering cholesterol. Results for the randomly selected subjects are shown in the table. The differences have a normal distribution. Are the subjects’ cholesterol levels lower on average after the diet? Test at the 5% level.

| Subject | A | B | C | D | E | F | G | H | I |
|---|---|---|---|---|---|---|---|---|---|
| Before | 209 | 210 | 205 | 198 | 216 | 217 | 238 | 240 | 222 |
| After | 199 | 207 | 189 | 209 | 217 | 202 | 211 | 223 | 201 |

Figure 7.5: Cholesterol levels

Confidence Intervals for the Mean Difference

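The general format of a confidence interval is (PE – MoE, PE + MoE), where PE is the point estimate and MoE is the margin of error.

The population parameter of interest is μd, the mean difference. Our point estimate is $\bar{x}_d$, the sample mean of the differences.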

If we are using the t-distribution, the margin of error for the population mean difference is:

MoE = $t_{\alpha/2} \cdot \dfrac{s_d}{\sqrt{n}}$

- $t_{\alpha/2}$ is the t critical value with area to the right equal to $\alpha/2$

- use df = n – 1 degrees of freedom, where n is the number of pairs

- $s_d$ = standard deviation of the differences
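A minimal sketch of this interval, applied to the hypnotism differences from Figure 7.3 (where the test did find a significant difference). It assumes the critical value is supplied by the user rather than computed: 2.365 is the standard t-table value for 95% confidence with df = 7; software such as `scipy.stats.t.ppf(0.975, 7)` would return essentially the same number. The function name `mean_difference_ci` is hypothetical.

```python
import math
import statistics

def mean_difference_ci(differences, t_crit):
    """Return (PE - MoE, PE + MoE) with MoE = t_crit * s_d / sqrt(n)."""
    n = len(differences)
    x_bar_d = statistics.mean(differences)  # point estimate of mu_d
    s_d = statistics.stdev(differences)     # sample SD of the differences
    moe = t_crit * s_d / math.sqrt(n)       # margin of error
    return x_bar_d - moe, x_bar_d + moe

# Hypnotism differences (after - before) from Figure 7.3.
differences = [0.2, -4.1, -1.6, -1.8, -3.2, -2.0, -2.9, -9.6]
# Assumed t critical value for 95% confidence, df = 8 - 1 = 7, from a t-table.
T_CRIT_95_DF7 = 2.365
lower, upper = mean_difference_ci(differences, T_CRIT_95_DF7)
print(f"95% CI for mu_d: ({lower:.2f}, {upper:.2f})")
```

The interval runs from about –5.56 to –0.69, so it lies entirely below zero, which is consistent with the conclusion that pain scores are lower after hypnotism.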

Example

A college football coach was interested in whether the college’s strength development class increased his players’ maximum lift (in pounds) on the bench press exercise. He asked four of his players to participate in a study. The amount of weight they could each lift was recorded before they took the strength development class. After completing the class, the amount of weight they could each lift was again measured. The data are as follows:

| Weight (in pounds) | Player 1 | Player 2 | Player 3 | Player 4 |
|---|---|---|---|---|
| Amount of weight lifted prior to the class | 205 | 241 | 338 | 368 |
| Amount of weight lifted after the class | 295 | 252 | 330 | 360 |

Figure 7.6: Weight lifted

The coach wants to know if the strength development class makes his players stronger on average.

Solution

Record the differences data. Calculate the differences by subtracting the amount of weight lifted prior to the class from the weight lifted after completing the class. The data for the differences are: {90, 11, -8, -8}. Assume the differences have a normal distribution.

Using the differences data, calculate the sample mean and the sample standard deviation:

$\bar{x}_d$ = 21.3, $s_d$ = 46.7

NOTE: These data suggest that the distribution of differences is actually right-skewed, which casts doubt on the normality assumption. The difference 90 may be an extreme outlier: it pulls the sample mean up to 21.3 (positive), while the mean of the other three differences is actually negative.
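The outlier's pull on the mean is easy to see by recomputing the mean with and without the value 90. This is a small illustration using the differences {90, 11, -8, -8} from the solution above.

```python
import statistics

# Differences (after - before) for the four players in Figure 7.6.
differences = [90, 11, -8, -8]

mean_all = statistics.mean(differences)                           # all four players
mean_without_90 = statistics.mean(d for d in differences if d != 90)  # drop the possible outlier
print(mean_all, mean_without_90)
```

With all four players the mean is 21.25 (reported as 21.3); without the 90 it is –5/3, about –1.67. With only four pairs, a single value can reverse the sign of the estimate, which is why the normality assumption deserves scrutiny here.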

Using the differences data, this becomes a test of a single population mean.

Define the random variable:

$\bar{X}_d$ = mean difference in the maximum lift per player.

The distribution for the hypothesis test is t3.

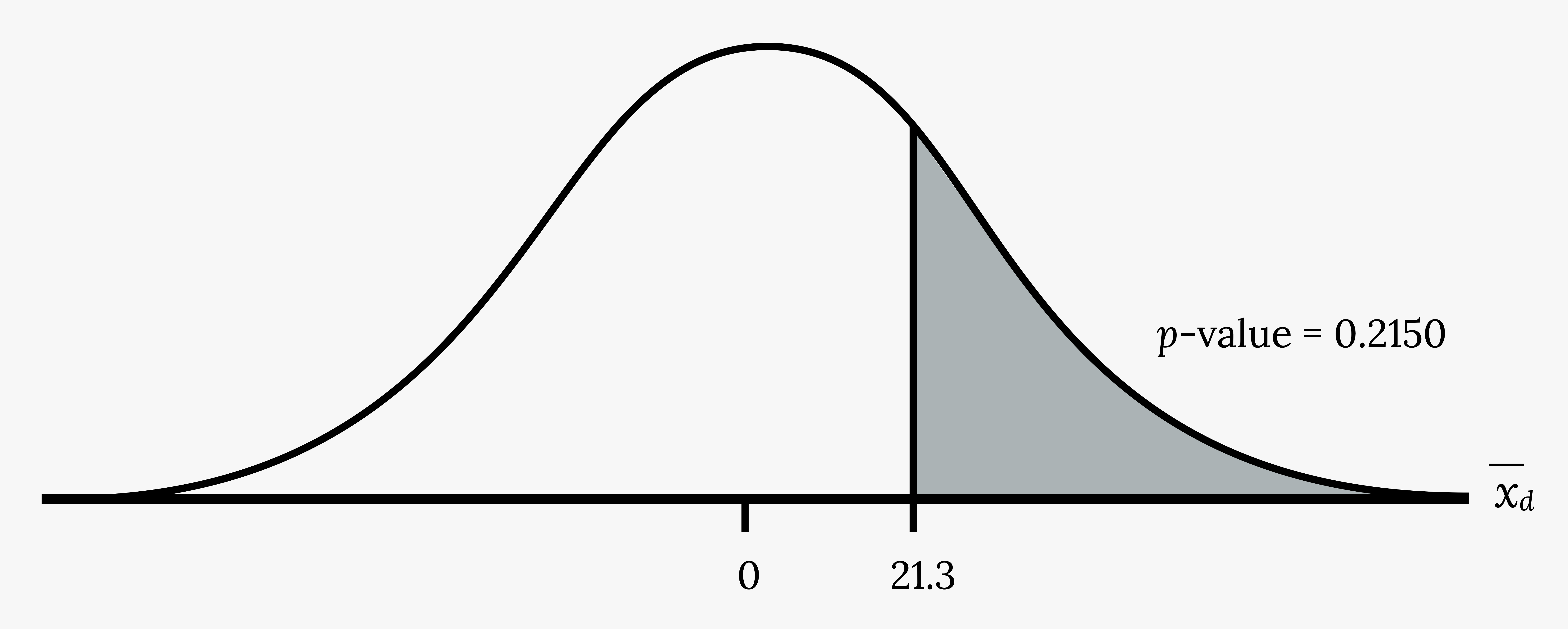

H0: μd ≤ 0

Ha: μd > 0

Calculate the p-value.

The p-value is 0.2150.

Decision:

If the level of significance is 5%, the decision is not to reject the null hypothesis, because α < p-value.

What is the conclusion?

At a 5% level of significance, from the sample data, there is not sufficient evidence to conclude that the strength development class helped to make the players stronger, on average.

Your Turn!

A new prep class was designed to improve SAT scores. Four students were selected at random. Their scores on two practice exams were recorded, one before the class and one after. The data are recorded in the figure below. Are the scores, on average, higher after the class? Test at a 5% level.

| SAT scores | Student 1 | Student 2 | Student 3 | Student 4 |
|---|---|---|---|---|
| Score before class | 1840 | 1960 | 1920 | 2150 |
| Score after class | 1920 | 2160 | 2200 | 2100 |

Figure 7.7: SAT scores


Figure References

Figure 7.1: Ali Inay (2015). variety of foods on top of gray table. Unsplash license. https://unsplash.com/photos/y3aP9oo9Pjc

Figure 7.4: Kindred Grey (2020). Reported pain p-value. CC BY-SA 4.0.

Figure 7.7: Kindred Grey (2020). Weight lifted p-value. CC BY-SA 4.0.

Figure Descriptions

Figure 7.1: Aerial picture of a table full of breakfast food including waffles, fruit, breads, coffee, etc.

Figure 7.4: Normal distribution curve showing the values zero and -3.13. -3.13 is associated with p-value 0.0095 and everything to the left of this is shaded.

Figure 7.7: Normal distribution curve with values of zero and 21.3. A vertical upward line extends from 21.3 to the curve and the p-value is indicated in the area to the right of this value.

An inactive treatment that has no real effect on the response variable

The facet of statistics dealing with using a sample to generalize (or infer) about the population

Numerical data with a mathematical context

Data that describes qualities or puts individuals into categories; also known as categorical data

Very similar individuals (or even the same individual) receive two different treatments (or treatment vs. control), then the results are compared

The mean of the differences in a matched pairs design

The probability distribution of a statistic at a given sample size

The value that is calculated from a sample used to estimate an unknown population parameter